The TCUP Math Problem: How a Busted Spreadsheet Rewrote the Medical Cannabis Map

There is a particular kind of regulatory failure that does not arrive with subpoenas or headlines. It slips in quietly, dressed up in spreadsheets and procedural language, hiding in a denominator that nobody bothers to question. It looks clean, professional, even defensible—right up until someone actually runs the numbers.

That is precisely what has happened in the Texas Compassionate Use Program expansion under House Bill 46. The Department of Public Safety (DPS) published a scoring rubric that promised a simple, balanced framework: four categories, each carrying equal weight. What the State implemented was not that framework. It was something materially different, and the difference is not philosophical or interpretive. It is mathematical, and it changed who won.

The Rule the State Published

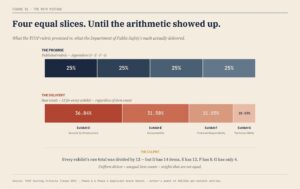

DPS told applicants, in plain English, that four categories would each account for 25 percent of the final score. Those categories—Security and Infrastructure, Accountability, Financial Responsibility, and Technical and Technological Ability—were presented as equal partners in the evaluation process.

There was nothing subtle about that promise. It was repeated in the rubric, relied upon in applicant preparation, and understood as the governing structure of the competition. Four equal slices of the pie, adding cleanly to one hundred percent. That is the rule applicants were told they were competing under.

The Structure Beneath the Rule

Beneath that clean promise, however, sat a more complicated reality. Each category contained a different number of scoring items. Security and Infrastructure included fourteen separate elements. Accountability included twelve. Financial Responsibility included eight. Technical and Technological Ability, the category that speaks most directly to whether an operator can actually run a compliant medical cannabis program, included just four.

Each of those items was scored by three evaluators on a scale of zero to five hundred, so a single item can earn at most fifteen hundred points. That structure produces dramatically different raw scoring ceilings. A perfect score in Security and Infrastructure reaches twenty-one thousand points, Accountability eighteen thousand, and Financial Responsibility twelve thousand, while a perfect score in Technical and Technological Ability tops out at six thousand.
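The ceilings follow from simple multiplication: item count × three evaluators × 500 points. The short Python sketch below reproduces that arithmetic from the item counts stated above; the dictionary layout and the `raw_ceiling` helper are illustrative assumptions, not a copy of the DPS spreadsheet.

```python
# Item counts per category, as described in the published rubric.
ITEMS = {
    "Security and Infrastructure": 14,
    "Accountability": 12,
    "Financial Responsibility": 8,
    "Technical and Technological Ability": 4,
}
EVALUATORS = 3        # each item is scored by three evaluators
MAX_PER_SCORE = 500   # each evaluator scores on a 0 to 500 scale


def raw_ceiling(item_count: int) -> int:
    """Maximum raw points a category can earn: items x evaluators x 500."""
    return item_count * EVALUATORS * MAX_PER_SCORE


ceilings = {name: raw_ceiling(count) for name, count in ITEMS.items()}
for name, ceiling in ceilings.items():
    print(f"{name}: {ceiling:,}")
```

This prints 21,000, 18,000, 12,000, and 6,000 for the four categories, a combined raw maximum of 57,000.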

There is nothing inherently improper about uneven category sizes. Any seasoned regulator or procurement officer has seen rubrics where some sections are more granular than others. The critical requirement, and the one that determines whether the system is fair, is normalization. If the State promises equal weighting, then each category must be scaled to ensure it actually contributes equally, regardless of how many individual items it contains.

What Equal Weighting Actually Requires

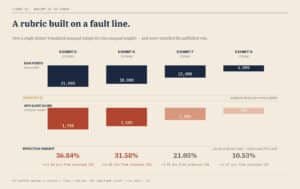

If you want four categories to count equally, the math is straightforward. You do not sum raw totals. You convert each category into a percentage of its own maximum possible score. Once each category is expressed as a percentage, you then apply equal weighting across those percentages.

In practical terms, that means taking an applicant’s score in each exhibit (one per category), dividing it by that exhibit’s maximum possible score, and then weighting each result at twenty-five percent. When you add those four weighted values together, you get a final score that reflects the rule the State said it would follow.

This is not exotic mathematics. It is standard practice across regulated industries, procurement systems, and competitive licensing frameworks. It is how you translate unequal components into equal influence.
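As a minimal sketch of that normalization, assuming the raw ceilings implied by the rubric (fourteen, twelve, eight, and four items, each worth up to 1,500 points across three evaluators), an equal-weighted score could be computed like this. The function name `normalized_score` and the 0 to 100 output scale are illustrative choices, not anything DPS published.

```python
# Raw ceilings implied by the rubric: items x 3 evaluators x 500 points.
CEILINGS = {
    "Security and Infrastructure": 21_000,
    "Accountability": 18_000,
    "Financial Responsibility": 12_000,
    "Technical and Technological Ability": 6_000,
}
WEIGHT = 0.25  # the equal weight promised to each of the four categories


def normalized_score(raw: dict[str, float]) -> float:
    """Equal-weighted score on a 0 to 100 scale.

    Each category total is divided by that category's own ceiling
    (giving a fraction from 0 to 1) and weighted at 25 percent,
    then the four weighted fractions are summed.
    """
    total = 0.0
    for category, ceiling in CEILINGS.items():
        total += WEIGHT * (raw[category] / ceiling)
    return 100 * total


# A perfect score in a single category, zero elsewhere.
only_security = dict.fromkeys(CEILINGS, 0)
only_security["Security and Infrastructure"] = 21_000
only_technical = dict.fromkeys(CEILINGS, 0)
only_technical["Technical and Technological Ability"] = 6_000
print(normalized_score(only_security), normalized_score(only_technical))  # 25.0 25.0
```

Because every category is first expressed as a fraction of its own maximum, a perfect score in the fourteen-item category and a perfect score in the four-item category are each worth the same twenty-five points.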

What the State Actually Did

Instead of normalizing each category to its own maximum, DPS applied a single divisor across all four exhibits. Every raw score, regardless of whether it came from a category with fourteen items or one with four, was divided by twelve.

At first glance, that may look like a harmless simplification. It is not. When you divide unequal totals by the same number, you do not equalize them. You preserve their imbalance and carry it forward into the final score.

The result is a set of “Applicant Scores” that look standardized but are anything but. Security and Infrastructure retains a ceiling of 1,750 points, Accountability 1,500, and Financial Responsibility 1,000, while Technical and Technological Ability is capped at just 500, for a combined maximum of 4,750. Measured against that total, the weighting shifts dramatically. Security and Infrastructure ends up driving roughly thirty-seven percent of the final score. Accountability contributes about thirty-two percent. Financial Responsibility falls to roughly twenty-one percent. Technical and Technological Ability, the category that should stand shoulder to shoulder with the others, is reduced to just over ten percent.
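The single-divisor method can be sketched the same way, assuming it worked as described here: each raw category total divided by twelve, and the results summed. The helper name `applicant_score` is hypothetical. Feeding in each category's maximum shows how much of the combined ceiling each category controls.

```python
# Raw ceilings implied by the rubric: items x 3 evaluators x 500 points.
CEILINGS = {
    "Security and Infrastructure": 21_000,
    "Accountability": 18_000,
    "Financial Responsibility": 12_000,
    "Technical and Technological Ability": 6_000,
}
DIVISOR = 12  # the single divisor applied to every exhibit


def applicant_score(raw: dict[str, float]) -> float:
    """Each raw category total divided by 12, then summed."""
    return sum(value / DIVISOR for value in raw.values())


# Scoring the ceilings themselves gives the largest possible total.
max_total = applicant_score(CEILINGS)  # 4750.0
for category, ceiling in CEILINGS.items():
    # Effective weight: the category's maximum contribution over the maximum total.
    share = (ceiling / DIVISOR) / max_total
    print(f"{category}: max {ceiling / DIVISOR:,.0f} pts, {share:.1%} of total")
```

The printed shares come out to about 36.8, 31.6, 21.1, and 10.5 percent. Replacing `DIVISOR` with any other positive constant leaves those percentages unchanged, because each share reduces to one category's raw ceiling divided by the sum of all four.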

That is not a rounding discrepancy or a clerical oversight. That is a complete reweighting of the system the State said it was using.

Why This Is Not a Close Call

There is no gray area here. Dividing unequal numbers by the same constant does not normalize them. It preserves their proportional differences. A category with a maximum score of twenty-one thousand will remain three and a half times as influential as a category capped at six thousand if both are subjected to the same divisor.
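In symbols, writing $M_S$ and $M_T$ (labels introduced here for illustration) for the raw ceilings of Security and Infrastructure and of Technical and Technological Ability, and $c$ for any common divisor, the division cancels out of the ratio, whereas dividing each category by its own maximum and weighting both at 25 percent brings the ratio to one:

$$
\frac{M_S / c}{M_T / c} = \frac{M_S}{M_T} = \frac{21{,}000}{6{,}000} = 3.5,
\qquad
\frac{0.25 \cdot M_S / M_S}{0.25 \cdot M_T / M_T} = 1.
$$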

This is arithmetic, not interpretation. Once the method is set, the outcome follows automatically. The State did not accidentally drift away from equal weighting. It implemented a formula that could never produce equal weighting.

The result is that the rule applicants relied upon and the method used to evaluate them are not the same.

This Was Not an Isolated Mistake

If this were a one-off inconsistency buried in a single application, it might be dismissed as a transcription error. It is not. A review of virtually every scoring entry across both phases of the licensing process shows the same method applied in every entry examined. Raw totals were divided by twelve, and those results were summed to produce final rankings.

This was the system. It was applied consistently. It was just not the system the State said it would use.

What Happens When You Fix the Math

When the applications are recalculated using the correct method—normalizing each category to its own maximum and then weighting them equally—the rankings change in ways that matter.

The very top of the list remains relatively stable. Companies that performed well across the board continue to perform well. The disruption occurs in the middle tier, where licenses are actually awarded.

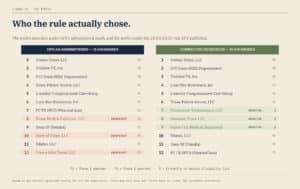

Under the corrected calculation, three companies that received conditional licenses fall out of the top twelve. In their place, three different applicants move into winning position. Those new entrants are Texas-based operators who performed exceptionally well in Technical and Technological Ability, the very category that was most heavily discounted under the State’s method.

What emerges is not randomness or noise. It is a clear pattern. The flawed formula elevated categories with more scoring items—primarily infrastructure—and suppressed the influence of technical competence. When you restore the intended weighting, applicants who excelled in technical execution rise accordingly.
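To see the mechanism in miniature, the sketch below scores two entirely hypothetical applicants under both methods. The numbers are invented for illustration and are not drawn from any actual TCUP application or from the recalculated rankings described above; they only show how an applicant that excels in Technical and Technological Ability can trail under the divide-by-twelve method and lead once each category is normalized to its own maximum.

```python
# HYPOTHETICAL applicants: invented numbers for illustration only,
# not actual TCUP applicant scores.
CEILINGS = {
    "Security and Infrastructure": 21_000,
    "Accountability": 18_000,
    "Financial Responsibility": 12_000,
    "Technical and Technological Ability": 6_000,
}

applicants = {
    "Applicant A (infrastructure-heavy)": {
        "Security and Infrastructure": 19_950,          # 95% of ceiling
        "Accountability": 14_400,                       # 80%
        "Financial Responsibility": 9_600,              # 80%
        "Technical and Technological Ability": 3_000,   # 50%
    },
    "Applicant B (technically strong)": {
        "Security and Infrastructure": 16_800,          # 80%
        "Accountability": 14_400,                       # 80%
        "Financial Responsibility": 9_600,              # 80%
        "Technical and Technological Ability": 5_700,   # 95%
    },
}


def state_method(raw: dict[str, int]) -> float:
    """Each raw category total divided by 12, then summed."""
    return sum(value / 12 for value in raw.values())


def equal_weighted(raw: dict[str, int]) -> float:
    """Each category as a fraction of its own ceiling, weighted 25 points."""
    return sum(25 * raw[c] / CEILINGS[c] for c in CEILINGS)


def ranking(score_fn) -> list[str]:
    """Applicant names sorted from highest to lowest score."""
    return sorted(applicants, key=lambda name: score_fn(applicants[name]), reverse=True)


print("Divide by 12:  ", ranking(state_method))
print("Equal weighted:", ranking(equal_weighted))
```

Under the divide-by-twelve method, Applicant A's 3,150-point raw edge in Security and Infrastructure outweighs Applicant B's 2,700-point edge in Technical and Technological Ability, so A ranks first (3,912.5 to 3,875). Normalized, that same security edge is worth 3.75 points while the technical edge is worth 11.25, so B ranks first (83.75 to 76.25).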

Why This Matters Beyond the Applicants

It is tempting to treat this as a dispute between competing companies, but that framing misses the point. Every license issued under this system determines where dispensaries are built, which companies invest capital in Texas, and how patients access medical cannabis.

For nearly a decade, Texas operated with just three dispensing organizations serving a vast and geographically dispersed patient population. House Bill 46 was supposed to correct that imbalance and bring the program into alignment with the needs of the state.

If the licensing process that governs that expansion is built on a misapplied formula, the consequences are not abstract. They are felt in the placement of facilities, the availability of products, and the ability of patients to obtain treatment without driving across half the state.

This is not a paperwork problem. It is a capacity allocation problem with real-world effects.

The State’s Position and Its Exposure

The State represented to applicants that each category would carry equal weight. Applicants relied on that representation in structuring their submissions. That reliance is not incidental; it is the foundation of the competitive process.

When the implemented methodology diverges from the published rule, the issue moves beyond process into legitimacy. The State is no longer simply defending a policy choice. It is defending a result that does not align with the rule it set.

That is a difficult position to maintain, particularly in a regulated industry where credibility is currency. Every future licensing decision, every enforcement action, and every legislative hearing will be measured against whether the State followed its own rules here.

Author’s Note:

This article has been revised to more clearly present the scoring calculations underlying the Texas Compassionate Use Program licensing process. The updates expand the mathematical explanation and align the analysis with the methodology described in the State’s published rubric.